July 17, 2018

Written by Carolyn Keating

It’s one of the most memorable scenes in Star Wars: The Empire Strikes Back: Luke Skywalker gets his hand lightsaber-ed off by Darth Vader. But all is not lost for our hero; he receives a new artificial hand that looks and performs just like his old one. While this advanced technology may once have been thought of as science fiction, today it is becoming reality.

Normally, the brain interacts with the outside world by sending commands through peripheral nerves, neurons outside the brain and spinal cord that cause the muscles of the body to move; for instance, you might reach out and grab a cup. Brain-computer interfaces (BCI), also called brain-machine interfaces or direct neural interfaces, bypass the peripheral nerves and muscles, and allow the brain to communicate directly with the environment; for instance, your brain might cause a robotic arm to reach out and grab a cup.

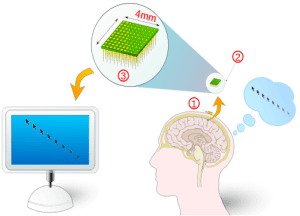

There are many different kinds of BCI systems, but they’re all based on the same general idea. Brain signals are detected and then processed and decoded. The decoded brain signals are then translated into a signal that controls the BCI output (such as a robotic arm or computer screen cursor). Depending on exactly how the brain signals are detected, patients learn to associate generation of particular types of brain activity with the desired BCI output [1]. For example, a patient might use a BCI that detects activity in the somatosensory and motor cortex regions of the brain.

Cells in these regions fire at different intensities or rhythms when someone is preparing to move or imagining movement. With BCI, these changes in firing can be coupled to the movement of a cursor on a computer screen, and the patient learns to alter the intensity or rhythm of their sensorimotor firing to move the cursor, with the help of feedback from the computer about whether they’ve been successful (Figure 1). Note that it’s not exactly mind-reading; patients find that mental imagery can help them learn how to control their neuronal activity, but to control a computer cursor they can imagine moving a variety of body parts, and as they get better with training over time they don’t need imagery at all [2].
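To make the detect, decode, and translate steps a little more concrete, here is a toy sketch in Python. It is not how any real BCI is implemented. It assumes the rhythm of interest sits at roughly 8 to 12 Hz, uses a made-up sine wave in place of recorded brain activity, and picks an arbitrary rule (more rhythm power than a resting baseline moves the cursor up, less moves it down). All function names and parameters are hypothetical.

```python
import math

def band_power(samples, sample_rate_hz, low_hz, high_hz):
    """Average spectral power between low_hz and high_hz, using a naive DFT."""
    n = len(samples)
    total, bins = 0.0, 0
    for k in range(1, n // 2):
        freq = k * sample_rate_hz / n
        if low_hz <= freq <= high_hz:
            re = sum(s * math.cos(2 * math.pi * k * i / n) for i, s in enumerate(samples))
            im = sum(s * math.sin(2 * math.pi * k * i / n) for i, s in enumerate(samples))
            total += (re * re + im * im) / (n * n)
            bins += 1
    return total / bins if bins else 0.0

def decode_cursor_step(samples, sample_rate_hz, baseline_power, gain=1.0):
    """Translate rhythm power into a vertical cursor step.

    Assumed rule (illustration only): power above the resting baseline
    gives a positive step (up), power below it gives a negative step (down).
    """
    power = band_power(samples, sample_rate_hz, 8.0, 12.0)  # assumed 8-12 Hz band
    return gain * (power - baseline_power)

def synthetic_signal(amplitude, sample_rate_hz=100, seconds=1.0, freq_hz=10.0):
    """Stand-in for a recorded signal: a pure sine wave at freq_hz."""
    n = int(sample_rate_hz * seconds)
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate_hz) for i in range(n)]

# "Calibration": measure rhythm power while the user is at rest.
baseline = band_power(synthetic_signal(1.0), 100, 8.0, 12.0)

# "Feedback loop": a stronger rhythm nudges the cursor up, a weaker one down.
step_up = decode_cursor_step(synthetic_signal(2.0), 100, baseline)
step_down = decode_cursor_step(synthetic_signal(0.5), 100, baseline)
print(step_up > 0, step_down < 0)
```

In a real system the user would watch the cursor respond and gradually learn to raise or lower the rhythm on purpose, which is the feedback-driven training described above.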

While sensorimotor rhythm modulation is one type of BCI, there are many kinds of devices. One major distinction between the different systems is whether they are invasive or non-invasive. Invasive systems involve surgically implanting electrodes, or a grid of electrodes, either on the surface of the brain beneath the skull or deeper in the grey matter. An advantage is that the recorded brain signals are very clear, and the electrodes can be placed in specific regions of interest. On the downside, placement does require brain surgery(!), and over time scar tissue often forms around the electrodes and can block the signals. In contrast, non-invasive systems require no surgery, and instead collect brain signals from the external surface of the scalp, using techniques like electroencephalography (EEG). While this method is easy and painless, the skull blocks and distorts some of the brain signals before they can reach the scalp.

Regardless of how the brain signals are recorded, the results of harnessing this neural communication and linking it to an external device are pretty spectacular. Already, BCIs are changing the lives of many disabled people and allowing them to experience the world in a way they were previously unable to. For example, a quadriplegic woman is now able to control a robotic arm with her thoughts. A BCI can control a robotic exoskeleton to help those with paralyzed legs walk (see video in Figure 2).

So far we’ve talked about BCI as a means for a brain to send information out to a device, but it’s also important for the brain to receive incoming information from the outside world. If you think about when you pick up something heavy and sturdy like a book, versus when you pick up something light and fragile like an egg, you’ll notice that you apply different pressures and fine movements depending on the object. Your brain receives sensory information about what you’re holding and adjusts the movements of your hand accordingly. People using robotic body parts need that same kind of information, so in addition to sending motor commands out of their brains, their neurons need to be able to take in sensory information from a computer as well, which can be a lot more challenging for scientists to reproduce.

Despite these challenges, sensory BCIs currently exist that help blind people see by translating images from a video camera to stimulation in the brain’s visual regions, resulting in the formation of images. And although not technically a BCI, devices for blind people exist that translate images seen on a video camera to electrical stimulation on the tongue, which users can learn to “read.”

BCI isn’t just limited to helping people with disabilities, however. Increasingly, tech companies are interested in developing BCI for use in video games, or to enhance the lives of non-disabled people. For instance, Facebook is working on a device that will allow people to type by thinking. And Elon Musk’s Neuralink venture (explained here as a “wizard hat” in Parts 4 and 5 of the article) aims to detect signals from a billion neurons and send that data wirelessly to computers, the cloud, or other people with these devices installed; you could have a conversation with someone just by thinking at them!

While exciting, the explosion of BCI technology for health, entertainment, and enhancement has brought with it a host of ethical considerations. One issue is responsibility: actions could be triggered by subconscious or passing thoughts that the user had no intention of actually carrying out. How would the legal system deal with these consequences? Privacy and security are also major concerns. What if someone’s BCI could be hacked into so that they are no longer in control? Or what if creators of these devices keep track of user information such as psychological traits and mental states, creating a real-life Westworld (or something similar to the recent Facebook privacy scandal)? [3]

Future BCI efforts will hopefully be able to move forward with these ethical considerations in mind. As technology advances, restoring function for disabled patients, or even augmenting the function of “normal” people, will stop being the exception and instead become the rule.

References

1. Chaudhary, U., Birbaumer, N. & Ramos-Murguialday, A. Brain-computer interfaces for communication and rehabilitation. Nat. Rev. Neurol. 12, 513–525 (2016).

2. Kübler, A., Nijboer, F., Mellinger, J., Vaughan, T.M., Pawelzik, H., Schalk, G., McFarland, D.J., Birbaumer, N. & Wolpaw, J.R. Patients with ALS can use sensorimotor rhythms to operate a brain-computer interface. Neurology 64, 1775–1777 (2005).

3. Burwell, S., Sample, M. & Racine, E. Ethical aspects of brain computer interfaces: a scoping review. BMC Med. Ethics 18, 60 (2017).

Images

Figure 1 from Balougador via Wikimedia Commons, CC BY-SA 3.0 https://commons.wikimedia.org/wiki/File:InterfaceNeuronaleDirecte-tag.svg.

Figure 2 from Kwak, N.-S., Müller, K.-R. & Lee, S.-W. A lower limb exoskeleton control system based on steady state visual evoked potentials. Journal of Neural Engineering. "A brain-computer interface for controlling an exoskeleton." CC BY-SA 4.0.