June 7, 2022

Written by: Omer Zeliger

Neural networks are everywhere. In fact, you’ve probably used one today without even thinking about it. From waking up an iPhone with “Hey Siri!”, to reading text from handwriting with optical character recognition, to searching for recipes on Google1,2,3, neural networks are integrating seamlessly into our daily lives.

Neuroscience is no exception to this trend. Much like Google and Apple, neuroscientists often use neural networks to assist in data analysis for research. For example, a software package called DeepLabCut uses neural networks to automatically track an animal’s position in space, saving researchers who study animal behavior from having to manually record animals’ movements over hours of video4. Neuroscientists also have one additional, unique use for neural networks: directly modeling how real neurons interact with each other to test hypotheses about the brain.

Neural networks as models of the brain

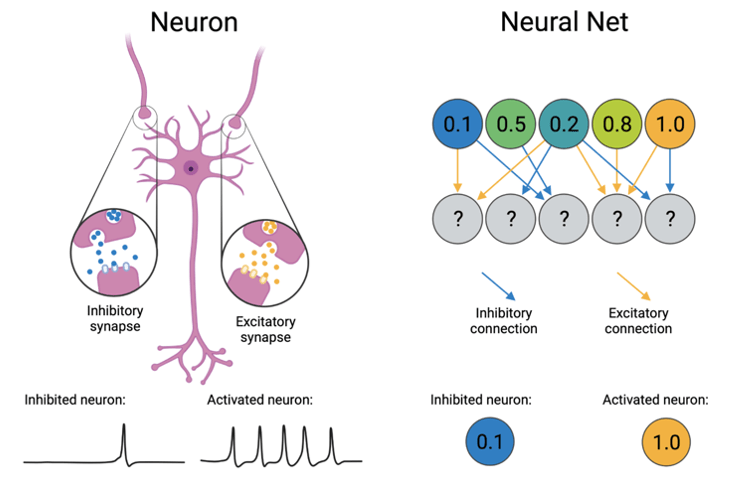

Computational neural networks were originally inspired by how neurons work in the brain. Instead of using real neurons, these networks function by building collections of mathematical “neurons” that can fine-tune their activity in order to perform a single task better and better. Each network is made up of hundreds of interconnected neurons that share two important properties with real neurons: activation and connections. Whereas real neurons fire action potentials when they are active, artificial neurons represent their activation as a number, often between 0 and 1. Once activated, both types of neurons pass along that information to their neighbors. Real neurons connect to each other through synapses where they can pass chemical messages to one another after every action potential, while artificial neurons can directly pass information to each other through mathematical equations. In both real and artificial networks, these connections can be either inhibitory, where the receiving neuron reduces its activation, or excitatory, where the neuron increases its activation. See figure 1 for a side-by-side comparison of neurons and neural nets.
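To make the idea of activation and connections concrete, here is a minimal Python sketch of a single artificial neuron. It assumes a sigmoid function to keep the activation between 0 and 1, and the weights and the names `sigmoid` and `neuron_activation` are illustrative choices, not taken from any particular model.

```python
import math

def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def neuron_activation(inputs, weights, bias=0.0):
    """Activation of one artificial neuron.

    inputs  -- activations of the sending neurons (each between 0 and 1)
    weights -- connection strengths: positive = excitatory, negative = inhibitory
    """
    total = bias + sum(a * w for a, w in zip(inputs, weights))
    return sigmoid(total)

# Two sending neurons with hypothetical weights: one excitatory, one inhibitory.
weights = [2.0, -2.0]
baseline = neuron_activation([0.0, 0.0], weights)   # neither neighbor is active
excited = neuron_activation([1.0, 0.0], weights)    # only the excitatory neighbor is active
inhibited = neuron_activation([0.0, 1.0], weights)  # only the inhibitory neighbor is active
```

With these assumed weights, the receiving neuron's activation rises above its baseline when the excitatory neighbor is active and falls below it when the inhibitory neighbor is active.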

Oft-modeled neurons: entorhinal grid cells and how they are modeled

Thanks to these similarities, researchers can create specialized neural networks that represent specific regions within the brain to better understand how those areas function. One area that has been extensively modeled is the entorhinal cortex. The entorhinal cortex is involved in spatial navigation and has neurons that are activated depending on where in space an animal is located5. There are several subtypes of these neurons in entorhinal cortex that represent different kinds of information about where the animal is in the environment. For example, border cells respond when an animal is standing near a wall, head direction cells fire when the animal is facing a particular direction, and grid cells activate when the animal stands in one of several spatial locations that together make up a triangular grid. Figure 2 shows an example of how standing in different locations might influence the firing rate of a single entorhinal grid cell.
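One common way to picture a grid cell's triangular firing pattern is to add together three waves whose directions are 60 degrees apart. The sketch below uses that idealization. It is an illustration rather than a description of how real grid cells compute their firing, and the function name and its `spacing`, `orientation`, and `phase` parameters are assumptions made for this example.

```python
import math

def grid_cell_rate(x, y, spacing=0.5, orientation=0.0, phase=(0.0, 0.0)):
    """Idealized grid-cell firing rate, scaled to 0..1, at position (x, y).

    Sums three plane waves whose directions are 60 degrees apart; the
    peaks of the sum fall on the vertices of a triangular lattice whose
    neighboring vertices are `spacing` apart.
    """
    k = 4.0 * math.pi / (math.sqrt(3.0) * spacing)  # wave number for the chosen spacing
    total = 0.0
    for i in range(3):
        theta = orientation + i * math.pi / 3.0
        total += math.cos(k * ((x - phase[0]) * math.cos(theta)
                               + (y - phase[1]) * math.sin(theta)))
    # The sum ranges from -1.5 to 3, so rescale it to the range 0..1.
    return (total + 1.5) / 4.5

# Firing is maximal on a lattice vertex and minimal at the center of a triangle.
at_vertex = grid_cell_rate(0.0, 0.5)
at_triangle_center = grid_cell_rate(math.sqrt(3.0) / 12.0, 0.25)
```

Evaluating `grid_cell_rate` over a grid of positions and plotting the result gives a map of firing fields arranged in a triangular lattice.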

There’s a lot we still don’t know about grid cells. For example, we don’t know exactly how these cells form their namesake grid pattern, and there’s still some debate about what grid cells do in the brain. Most hypotheses about their function revolve around the idea that grid cells help us keep track of where we are in space5. Two competing theories for how grid cells know an animal’s location are that they use visual input to continuously orient themselves6, or that they keep track of an animal’s moment-to-moment speed to estimate its position over time7 – if a mouse leaves a train station 40 miles west of Philadelphia moving north at 75 miles per hour, does it need its eyes to tell it where it is?
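The second theory amounts to what navigators call dead reckoning, or path integration: add up speed and direction over time to estimate position. Here is a minimal Python sketch using the train-station mouse as input. The function name, the coordinate convention (Philadelphia at the origin, miles east and north), and the two-hour travel time are all assumptions made for illustration.

```python
import math

def integrate_path(start, steps):
    """Estimate position by dead reckoning (path integration).

    start -- (east, north) starting position in miles
    steps -- iterable of (speed_mph, heading_deg, duration_hours),
             with heading measured clockwise from north
    """
    east, north = start
    for speed, heading_deg, hours in steps:
        heading = math.radians(heading_deg)
        east += speed * math.sin(heading) * hours   # eastward displacement
        north += speed * math.cos(heading) * hours  # northward displacement
    return east, north

# The mouse starts 40 miles west of Philadelphia and travels north at 75 mph.
# The two-hour duration is an assumed value.
position = integrate_path(start=(-40.0, 0.0), steps=[(75.0, 0.0, 2.0)])
```

No visual input is needed for this estimate, but any error in the measured speed or heading accumulates with every step.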

The grid cell attractor model, first proposed by Alexis Guanella and Paul Verschure in 2006, incorporates anatomical features of real grid cells to translate this second idea into a functioning neural network8. In doing so, it demonstrates that real neurons could feasibly use similar techniques. To get a better idea of how scientists come up with these models and understand how their building blocks can be inspired by actual cells, let’s break down the attractor model into its individual components. Keep in mind that while real grid cells have more connections and complexity than this, the basic attractor model picks and chooses only those that are absolutely necessary to create a functional neural network.

The attractor model is built from a two-dimensional network of grid cells, each of which receives three types of input9. First, every cell receives constant excitation representing input from a neighboring brain region, the hippocampus (which has been shown to be necessary for real grid cells to function10). Second, to represent input from the aforementioned entorhinal head direction cells, every artificial grid cell has a preferred motion direction, where it receives extra excitation when the animal is moving in that specific direction. Lastly, if a cell is active, it inhibits its neighbors in a donut shape, where the donut is shifted slightly in its preferred motion direction. This mimics a common pattern of inhibition in the brain called lateral inhibition, where neurons inhibit nearby neurons of similar types. To get a look at how this all fits together, see figure 3 for a visual representation. Altogether, this leads to clusters of active cells that are stable when the animal is staying still but slide around the 2D grid cell network when the animal is moving. If one particular grid cell is active when the animal stands at a specific location in space, moving will cause that activity cluster to slide away. If the animal moves to a different location where that cell is active, another activity cluster will slide in to take its place.
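The three inputs can be sketched in a few dozen lines of Python. This is a simplified illustration of the ingredients described above, not a reproduction of the published model: the sheet size, the strengths, the donut radii, the four-direction tiling, and every name in the code are assumptions chosen for readability, and these values are not tuned to produce realistic grid patterns.

```python
import math

SIZE = 12                # cells per side of the 2D sheet (edges wrap around)
BASE_DRIVE = 1.0         # constant excitation standing in for hippocampal input
VELOCITY_GAIN = 0.5      # extra excitation when moving in the preferred direction
INHIBITION = 0.2         # strength of each inhibitory connection
INNER, OUTER = 1.5, 3.5  # inner and outer radius of the inhibitory donut, in cells
SHIFT = 1                # how far the donut is displaced, in cells

# Preferred motion directions as (row, column) offsets on the sheet.
DIRECTIONS = [(0, 1), (1, 0), (0, -1), (-1, 0)]

def preferred_direction(row, col):
    """Tile the sheet so that every 2x2 block holds all four directions."""
    return DIRECTIONS[(row % 2) * 2 + (col % 2)]

def wrapped(delta):
    """Shortest signed offset between two indices on a ring of SIZE cells."""
    return (delta + SIZE // 2) % SIZE - SIZE // 2

def inhibition_weight(sender, receiver):
    """Connection weight: negative inside the sender's shifted donut, else zero."""
    d_row, d_col = preferred_direction(*sender)
    center = (sender[0] + SHIFT * d_row, sender[1] + SHIFT * d_col)
    dist = math.hypot(wrapped(receiver[0] - center[0]),
                      wrapped(receiver[1] - center[1]))
    return -INHIBITION if INNER <= dist <= OUTER else 0.0

def step(activity, velocity):
    """Update every cell once from its three inputs; velocity is (row, column)."""
    new = [[0.0] * SIZE for _ in range(SIZE)]
    for r in range(SIZE):
        for c in range(SIZE):
            pref = preferred_direction(r, c)
            # Input 2: extra drive only when motion aligns with the preferred direction.
            motion = VELOCITY_GAIN * max(0.0, pref[0] * velocity[0] + pref[1] * velocity[1])
            # Input 3: summed inhibition from every active cell whose donut covers this one.
            inhibition = sum(activity[sr][sc] * inhibition_weight((sr, sc), (r, c))
                             for sr in range(SIZE) for sc in range(SIZE))
            # Input 1 is BASE_DRIVE; activations cannot drop below zero.
            new[r][c] = max(0.0, BASE_DRIVE + motion + inhibition)
    return new

silent = [[0.0] * SIZE for _ in range(SIZE)]
at_rest = step(silent, velocity=(0, 0))
moving = step(silent, velocity=(0, 1))
```

Starting from a silent sheet, every cell receives only the constant drive when the animal is still, while movement adds excitation only to cells whose preferred direction matches it. Repeated calls to `step` then let the shifted inhibition shape how activity is distributed across the sheet.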

What they’ve done and where they’re going

Even though neural networks might manage to replicate patterns of activity we see in the brain, this alone isn’t sufficient evidence to prove that the brain really works that way – we still don’t know for sure if the attractor model accurately depicts how real grid cells keep track of an animal’s location. Still, that doesn’t mean these models can’t be useful for understanding real neural circuits. For example, researchers can modify neural network models by adding and taking away connections between neurons based on similar connections found in the brain area on which the model is based. By looking at what the model does when those connections are and aren’t present, they can make predictions about what those connections do in real brains9. Neural networks can also help rule out some possibilities by showing that they require properties that the real neurons in the circuit don’t have7. In the best-case scenario, these models can even inspire new techniques for building artificial intelligence by transforming the brain’s problem-solving methods into computer-usable code11.

Neural networks are a fantastic tool for modeling how real neurons interact with each other, and they have only gotten more powerful with recent technological advances. They let researchers answer questions while minimizing animal involvement, and grow symbiotically with advances in artificial intelligence. In the coming years, hopefully we’ll see these networks expanded to model more areas with more nuance as they teach us more about the brain.

References:

- Metz C. “AI Is Transforming Google Search. The Rest of the Web Is Next”. Wired, 4 February 2016.

- Thomas A. “An inside look into state-of-the-art Image and Optical Character Recognition”. Medium, 12 February 2021.

- Siri Team. “Hey Siri: An On-device DNN-powered Voice Trigger for Apple’s Personal Assistant”. Machine Learning Research at Apple, October 2017.

- Mathis A et al. (2018). DeepLabCut: markerless pose estimation of user-defined body parts with deep learning. Nature Neuroscience 21:1281–1289.

- Moser M, Rowland DC, Moser EI (2015). Place Cells, Grid Cells, and Memory. Cold Spring Harb Perspect Biol 7(2):a021808.

- Chen G, Manson D, Cacucci F, Wills TJ (2016). Absence of Visual Input Results in the Disruption of Grid Cell Firing in the Mouse. Current Biology 26(17):2335-2342.

- Schmidt-Hieber C & Häusser M (2013). How to build a grid cell. Philosophical transactions of the Royal Society of London 369(1635):20120520.

- Guanella A & Verschure PF (2006). A model of grid cells based on a path integration mechanism. International Conference on Artificial Neural Networks pp. 740-749.

- Kang L & Balasubramanian V (2019). A geometric attractor mechanism for self-organization of entorhinal grid modules. eLife 8:e46687.

- Bonnevie T et al. (2013). Grid cells require excitatory drive from the hippocampus. Nature Neuroscience 16(3):309-317.

- Pötzsch M, Krüger N, von der Malsburg V (1996). Improving object recognition by transforming Gabor filter responses. Network 7(2):341-347.

Cover image by LoggaWiggler via Pixabay

Figure 1 created with BioRender

Figures 2 and 3 created with PowerPoint
